
The backpropagation algorithm was originally introduced in the 1970s, but its importance wasn't fully appreciated until a famous 1986 paper by David Rumelhart, Geoffrey Hinton, and Ronald Williams. That paper describes several neural networks where backpropagation works far faster than earlier approaches to learning, making it possible to use neural nets to solve problems which had previously been insoluble. Today, the backpropagation algorithm is the workhorse of learning in neural networks. This chapter is more mathematically involved than the rest of the book.

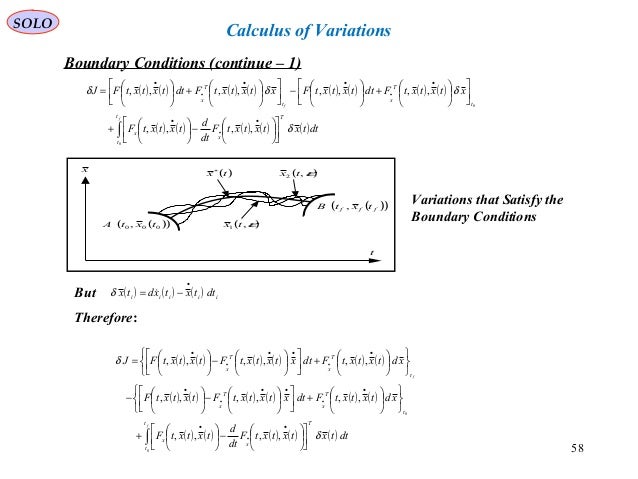

In the last chapter we saw how neural networks can learn their weights and biases using the gradient descent algorithm. There was, however, a gap in our explanation: we didn't discuss how to compute the gradient of the cost function. That's quite a gap! In this chapter I'll explain a fast algorithm for computing such gradients, an algorithm known as backpropagation.

In the calculus of variations and classical mechanics, the Euler–Lagrange equations are a system of second-order ordinary differential equations whose solutions are stationary points of the given action functional. The equations were discovered in the 1750s by Swiss mathematician Leonhard Euler and Italian mathematician Joseph-Louis Lagrange in connection with their studies of the tautochrone problem. This is the problem of determining a curve on which a weighted particle will fall to a fixed point in a fixed amount of time, independent of the starting point. Lagrange solved this problem in 1755 and sent the solution to Euler. Both further developed Lagrange's method and applied it to mechanics, which led to the formulation of Lagrangian mechanics. Their correspondence ultimately led to the calculus of variations, a term coined by Euler himself in 1766.

Because a differentiable functional is stationary at its local extrema, the Euler–Lagrange equation is useful for solving optimization problems in which, given some functional, one seeks the function minimizing or maximizing it. This is analogous to Fermat's theorem in calculus, which states that at any point where a differentiable function attains a local extremum, its derivative is zero. In Lagrangian mechanics, according to Hamilton's principle of stationary action, the evolution of a physical system is described by the solutions to the Euler equation for the action of the system. In this context Euler equations are usually called Lagrange equations. In classical mechanics, the formulation is equivalent to Newton's laws of motion; indeed, the Euler–Lagrange equations produce the same equations as Newton's laws. This is particularly useful when analyzing systems whose force vectors are complicated. The formulation has the advantage that it takes the same form in any system of generalized coordinates, and it is better suited to generalizations. In classical field theory there is an analogous equation to calculate the dynamics of a field.
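To make the equivalence with Newton's laws concrete, here is a short worked sketch. The notation is an assumption and does not come from the text above: $S$ is the action, $L$ the Lagrangian, $q(t)$ a generalized coordinate, and the example takes a single particle of mass $m$ moving in one dimension in a potential $V(x)$.

```latex
% Assumed notation: action S, Lagrangian L, generalized coordinate q(t).
\[
  S[q] = \int_{t_1}^{t_2} L\bigl(t, q(t), \dot q(t)\bigr)\, dt ,
  \qquad
  \frac{\partial L}{\partial q} - \frac{d}{dt}\,\frac{\partial L}{\partial \dot q} = 0 .
\]

% Illustrative example (assumed): one particle of mass m in a potential V(x).
\[
  L = \tfrac{1}{2} m \dot x^{2} - V(x),
  \qquad
  \frac{\partial L}{\partial x} = -V'(x),
  \qquad
  \frac{d}{dt}\,\frac{\partial L}{\partial \dot x} = m \ddot x .
\]

% Substituting into the Euler--Lagrange equation recovers Newton's second law.
\[
  -V'(x) - m \ddot x = 0
  \quad\Longrightarrow\quad
  m \ddot x = -V'(x) = F(x) .
\]
```

In this simple case the stationarity condition for the action reduces to Newton's second law, with the force given by $F(x) = -V'(x)$.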
